10 Cool Facts About The Invention of the Computer

The computer defines our modern way of life. For most of us, work involves sitting behind or interacting with a computer of some sort; even children use them to do research and type up school projects.

We give little thought to the amazing computers that power our daily lives (except to complain when they are too slow), but they are fascinating pieces of equipment. Here is a list of 10 cool facts that you never knew (but really should) about the invention of the computer.

10. The word ‘Computer’ originally referred to a person, not to a machine

These days when we refer to a ‘computer’ everyone knows that we are talking about a machine. When the term was originally coined, however, it meant something rather different. The verb ‘to compute’, meaning to make a calculation, was first recorded in 1631. As is so often the case, the verb led to a descriptive noun: a few years later, in 1646, we see the first recorded use of the noun ‘computer’, referring to someone who was able to make mathematical calculations, or ‘to compute’. It was only in the 20th century that the word gradually came to be associated with an electronic computing machine. Over time these machines were modified to do more than make mathematical computations, giving rise to the modern-day computer.

9. Mechanical computing devices have been in use for thousands of years

Prior to the invention of the computer as we know it, our ancestors relied on a range of alternative methods of calculation.

The ancient Babylonians used a form of abacus from around 2400 BC and it became (and still is) a popular calculating device in the Far East. The Chinese developed a number of techniques for using the abacus which allowed them to perform complex calculations including multiplication, division, square roots and even cube roots.

Abaci are, however, relatively simple counting devices. The oldest analog computer that has been discovered was recovered from the Antikythera shipwreck. Believed to date back to around 100 BC, this Antikythera Mechanism is thought to have been used to calculate astronomical positions, working as a complex mechanical model that tracked the movements of the solar system. It was the most complex machine of its time, so much so that nothing approaching that level of complexity is known from the subsequent 1,000 years.

By the 1600s people were starting to rely on the slide rule to assist them in making difficult calculations involving logarithms, reciprocals and trigonometry. These devices were so reliable that they were still in common use as late as the 1960s and 70s (some fields, such as aviation, still use a modified slide rule today).

All these devices were used to help their operators make mathematical calculations, but modern computers do so much more. Indeed, the most common daily use of a computer is as a typewriter/word processor and a tool for communicating on social media. This function, too, has its antecedents. In 1770, more than 200 years before Twitter took the world by storm, a watchmaker from Switzerland, Pierre Jaquet-Droz, built a doll that could be programmed to write short messages (up to 40 letters long) using a quill and ink.

Most people, prior to the invention of the computer and calculator, had to rely on a combination of a slide rule and printed mathematical tables, which were often full of mistakes. Calculations took a long time and had to be double and triple checked.

8. Charles Babbage invented the first modern computer in 1821

By the 1800s the increasing complexity of the calculations required in daily life was leaving people frustrated with the limitations of tables and other available computing devices. In around 1820 an Englishman called Charles Babbage was tasked with making some improvements to the tables used in the Nautical Almanac. Frustrated with how long the process took, with its requisite delays for double checking, Babbage started to wonder whether the calculations could instead be performed by a steam-driven machine. By 1822 he had devised a mechanical calculating machine which he called a ‘difference engine’. The government funded him to develop the engine but the project was suspended in the 1830s. By that time all of the relevant parts of the calculating mechanism had been made but they had not been assembled. Had the engine been completed, it would have weighed more than 2 tons!
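For readers curious about why such a machine could replace table-makers, here is a minimal sketch of the method of finite differences that gives the difference engine its name. Once a polynomial's starting value and its differences are set up, every further table entry needs only addition, which gears can do. The function names `difference_table` and `tabulate` and the sample polynomial are illustrative assumptions, not a description of Babbage's actual mechanism.

```python
def difference_table(poly, start, order):
    """Return the starting value of poly and its forward differences.

    `order` is the degree of the polynomial; the step between table
    entries is assumed to be 1.
    """
    values = [poly(start + i) for i in range(order + 1)]
    column = []
    while values:
        column.append(values[0])
        # Each pass replaces the list with the differences of its neighbours.
        values = [b - a for a, b in zip(values, values[1:])]
    return column


def tabulate(column, count):
    """Produce `count` table entries using additions only."""
    column = list(column)
    results = []
    for _ in range(count):
        results.append(column[0])
        # Add each difference into the entry above it, top to bottom.
        for i in range(len(column) - 1):
            column[i] += column[i + 1]
    return results


# Illustrative polynomial: x^2 + x + 41, tabulated from x = 0.
setup = difference_table(lambda x: x * x + x + 41, 0, 2)
print(tabulate(setup, 5))
```

After the initial setup no multiplication is ever performed, which is what made the approach attractive for a purely mechanical machine.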

In the process of researching his difference engine Babbage realized that the principles could be applied to much more than simple navigational computations and went on to design his analytical engine. This machine was designed to use the punch cards then commonly in use on weaving looms (and similar to those used in the early computers of the 1900s). It had a number of features, including a logic unit, conditional branching and integrated memory, that make this machine the first ever modern computer. The machine even had its own printer. Nothing like it would be developed for another century; it was ahead of its time and, indeed, its development was hampered by the fact that all the parts had to be made by hand.

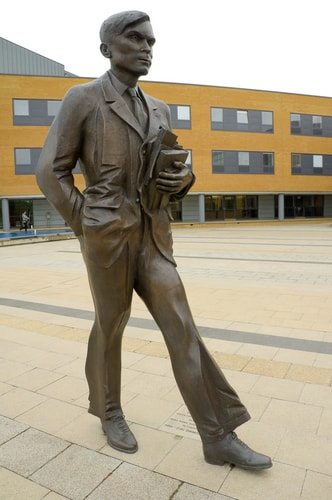

7. The concept of what was to become the modern computer was defined in 1936

In 1936 Alan Turing developed the idea of the modern computer by introducing the concept of the ‘universal machine’: a fully programmable machine that could compute anything that is computable.

The US Navy was interested in using computers to calculate the trajectory of torpedoes fired from submarines in order to make them more accurate, and developed the Torpedo Data Computer. This integrated fire-control system tracked the target and kept the torpedoes aimed at it on a continuous basis, and was more sophisticated than any other firing system at the time. It relied on a system of electrical switches to drive mechanical relays.

In Germany, computer pioneer Konrad Zuse developed computers that relied on the binary system of calculation (Babbage’s computers had operated on a decimal system). His computers were the first to be fully programmable and had separate storage. His first computers were destroyed in Allied bombing raids on Berlin but Zuse was undeterred. In 1945 he developed Plankalkül, often described as the first high-level programming language, and in 1946 he founded the world’s first computer company, raising funding through an IBM option on his patents.

6. World War II was a major catalyst for computer development

One of the biggest challenges of the War was to crack the German ‘Enigma’ codes that directed the U-boat operations against the Allies. The British operation at the secure Bletchley Park site famously cracked the codes. Once this had been done, thoughts turned to the possibility of cracking the even more sophisticated ‘Tunny’ communications that carried high-level army intelligence. Tommy Flowers, who had been instrumental in converting telephone exchanges to electronic networks, was brought in to construct a machine that could run the decryption.

Flowers designed Colossus, which was the first electronic digital programmable computer in the world. It was working by the end of 1943 and was moved to Bletchley Park in early 1944, where it was immediately set to work on breaking the encryptions.

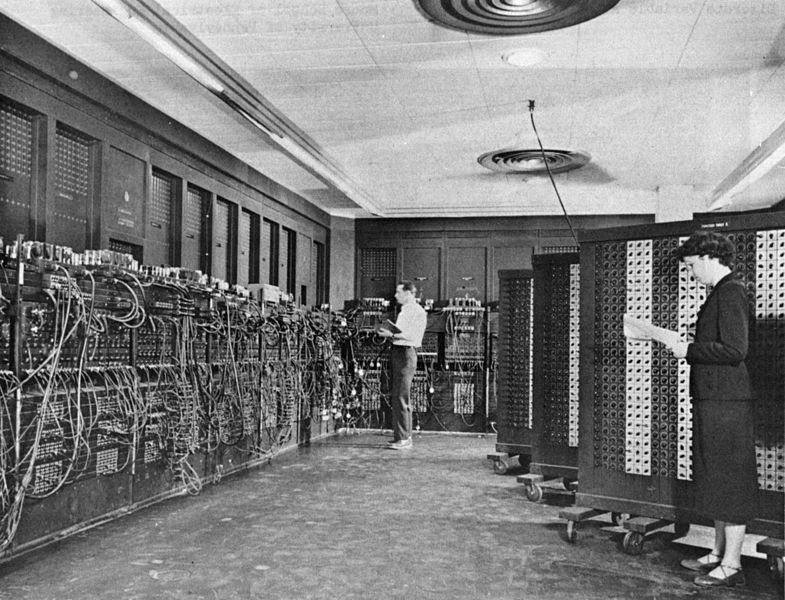

On the other side of the Atlantic, the first programmable computer built in the US was the ENIAC, or Electronic Numerical Integrator and Computer. It was programmed by setting cables and switches in a defined manner; once a program had finished running, the cables had to be reset for the next one. The machine was used to calculate ballistic trajectories. It weighed about 30 tons and used roughly 18,000 vacuum tubes.

Both machines were kept very secret during the war. At the cessation of hostilities, however, the existence of ENIAC was made public while Colossus was kept secret (presumably to protect British encrypted messages). Flowers was unable to garner any public acclaim for his much more sophisticated machine, which had been in operation 2 years before the US version. All but two of the Colossus machines were destroyed, with the remaining Colossi being used for training.

Colossus and ENIAC remain important in the history of computing, not just because of their contribution to the Allied victory against tyranny but also because they established, once and for all, that large-scale computers were not only possible but practical.