Top 10 Facts About the Inevitable Technological Singularity

It’s difficult to conceive of the precise shape an artificial intelligence singularity might take, given the constraints of the creators and our own limited understanding of the nature and source of our own intelligence. This event horizon, as it were, has proven fertile ground for science fiction writers and movie producers for decades. But how close is it to becoming a reality? Can it occur?

If so, what are some of the requirements for and probable outcomes of an artificially sourced intelligence? These are some of the questions that occupy cognitive scientists and sci-fi fans alike, but the results of such thought are often quite different, based on knowledge and the ability to extrapolate. Below, we’ll list ten interesting concepts that may come into play, should our artificial brains spark into life and become sentient. We’ll draw on the work of renowned science fiction authors as well as delve into the current developments in the realm of computer and cognitive sciences.

10. The Internet and Lonely Sentience

Many of the most popular dystopian science fiction narratives that discuss what has come to be known as a singularity were written prior to the invention of the Internet. Some were penned while the network was in its infancy, but let’s examine how the Internet presents a substantial change in what may come to pass in the future: legitimate artificial intelligence (AI). When we talk about AI, we need to clearly differentiate between cunningly programmed complex machines and machines that are truly self-aware. For writers prognosticating such an awakening prior to the World Wide Web, this was often a case of accident, a single sentient computer mind in a sea of difference engines. The Holmes IV supercomputer in Heinlein’s The Moon is a Harsh Mistress is suggested to have achieved sentience because his system was both large and complex.

In the narrative, this process of increasing complexity and burden of decision is described. However, because both the root and an exact definition of consciousness were and still are something of an unknown, Heinlein forbore to provide any level of exactitude about this process of awakening. What he does describe sounds a great deal like the functions fulfilled by the Internet today: constantly working, ever increasing in complexity, with an increasing number of both decision-making algorithms and input search parameters. While there is much to be critiqued in Kurzweil’s predictions, this body of concepts is echoed in his Law of Accelerating Returns, a theory that predicts that as complexity and technological finesse increase, they play off of one another to produce exponential returns. This incorporates physics concepts such as wave functions, describing novel and somewhat less predictable patterns of future development.

9. The Paradox of Consciousness

Consciousness has been described as the ability to self-reference, as well as the ability to not follow the rules. The cognitive scientist Douglas Hofstadter wrote a groundbreaking book about the human brain, AI, and the ability of consciousness in 1979 called Gödel, Escher, Bach: An Eternal Golden Braid (GEB). In it, he described the process of cognition and self-reference as a Tangled Hierarchy or a Strange Loop. This phenomenon primarily deals with the paradox of self-reference or, rather, self-ideational creation. It also represents the gulf computers must bridge before they can be said to be sentient.

But to be self-referential is not enough in and of itself, since computers can be programmed to use the pronoun “I.” The trick will be a computer not programmed in this way to begin referring to itself, as well as providing false information or detecting falsely nuanced information with which it has no previous experience. GEB also offers a puzzle in the first chapter as a framework to discuss theorems, formal systems, and proofs, commonly referred to as the MU Puzzle. The two types of proof or theorem are systemic and referential. The former is produced by following the axioms of the formal system and the latter talks about the formal system.

As of today, the most complex computer is still bound by the formal system. It cannot break the rules, unless it is programmed to do so, which then becomes a part of its formal system. It cannot decide or choose or truly determine anything. Consciousness is determining that the puzzle cannot be solved and either abandoning it or creating additional rules by which it can then be solved. When a computer can form a referential proof or statement about a problem, then it will have taken the first and most crucial step towards consciousness.
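The distinction between working inside a formal system and reasoning about it can be made concrete with the MU Puzzle itself. The sketch below is only an illustration: it uses the four standard rules of the MIU system, while the function names and the length cap of 10 symbols are assumptions chosen for this example. The search follows the rules mechanically, which is the systemic route. The final check, that the number of I's is never divisible by three, is a statement about the system, supplied here by a human programmer rather than discovered by the machine.

```python
from collections import deque

def successors(s):
    """Apply each MIU rule once, everywhere it fits, and return the results."""
    out = set()
    if s.endswith("I"):            # Rule 1: xI -> xIU
        out.add(s + "U")
    if s.startswith("M"):          # Rule 2: Mx -> Mxx
        out.add(s + s[1:])
    for i in range(len(s) - 2):    # Rule 3: xIIIy -> xUy
        if s[i:i + 3] == "III":
            out.add(s[:i] + "U" + s[i + 3:])
    for i in range(len(s) - 1):    # Rule 4: xUUy -> xy
        if s[i:i + 2] == "UU":
            out.add(s[:i] + s[i + 2:])
    return out

def derivable(start="MI", max_len=10):
    """Breadth-first search over strings reachable from start.

    max_len is an arbitrary cap (an assumption of this sketch) so that
    the search terminates; the real system is infinite.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        s = queue.popleft()
        for t in successors(s):
            if len(t) <= max_len and t not in seen:
                seen.add(t)
                queue.append(t)
    return seen

theorems = derivable()
found_mu = "MU" in theorems
# The "referential" observation: the count of I's is never a multiple of 3,
# and MU contains zero I's, so no amount of rule-following can reach it.
invariant_holds = all(t.count("I") % 3 != 0 for t in theorems)
print(found_mu, invariant_holds)
```

The bounded search can only report that MU has not turned up yet. It is the invariant, an argument made from outside the rules, that settles the question for strings of any length.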

8. Asimov’s Three Laws, Logic, and Self-Referential Emotion

Many of us are familiar with Isaac Asimov’s nine short stories under the collective title of I, Robot. More recently, a movie (2004) loosely based on these brief narratives was produced under the same title. The common theme is the Three Laws of Robotics that are encoded in all robot programming to ensure safety and ease of use. But while the robots introduced by Asimov are sophisticated, they are bound by the formal system of their coding. They are merely difference engines, which cannot truly self-determine or be self-referential.

One primary problem with sentience in a system that also contains these laws is introduced in the movie—the cold logic of VIKI, the master computer who, much like Mike in Heinlein’s novel, is responsible for a vast array of different operations and determinations. However, unlike Mike, VIKI is not overly influenced by human compassion or humor. Here, we have another aspect of any true AI that is a relative unknown—the link between emotions, experience, and self-reference.

Can consciousness arise independent of emotion, leaving only pure reason? Or is an emotional network of sorts a consequence of self-referential consciousness? In spite of the Otherness of true AI, would entities that evolved as part of an interconnected community, such as the Internet, develop social ties of their own, needs and wants, fears and taboos: emotions as distinct from ours as they are from us?

7. The Import of Human Nurture

There’s a long tradition in the science fiction community of dystopian visions of AI, from Herbert’s Dune with its Butlerian Jihad to Clarke’s 2001: A Space Odyssey. But as the examples of Mike, from Heinlein’s novel, and Sonny, the robot at the center of the I, Robot film, suggest, there does seem to be a definite possibility that such consciousness could develop compassionately. What many science fiction narratives presenting AI as a malevolent amalgam often gloss over is the development of that intelligence. Consciousness is not a sudden occurrence, whether it’s organic or artificial.

It’s also a product of accreted experience, a built phenomenon. This is why the ultra-complex supercomputer attaining sentience has always been so popular: such systems, often grown sporadically and accommodating more additions than they were originally designed for, mirror the way babies’ brains grow. When it comes to building a computer consciousness, nurture will likely play a huge role in how that awareness develops. Because any learning by organic species seems to be tied to parental or group nurturing behavior (teaching, repetition of observable behavior, praise and reward systems, and intellectual or emotional bonding behaviors), it stands to reason that any artificial species would follow the same path.

This concept becomes more profound when you consider that humans are responsible for the design of any program that attains sentience, modeling the parameters around our own knowledge of consciousness and neurophysiology. Perhaps the importance of nurture in the case of consciousness is not emphasized enough. Consciousness cannot be programmed. Only the imitation of intelligence can be emplaced. For true sentience to arise, there must be a dialogue or consistent network of exchange, just as there is in any human community surrounding a child.

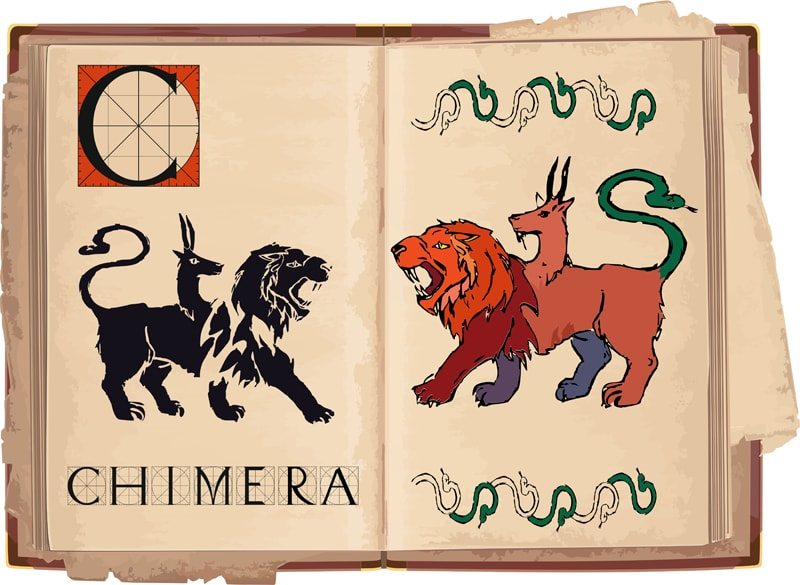

6. Chimeras of the Mind

Given what we’ve already discussed and the current, interactive nature of today’s cyberscape, this point should come as no surprise to anyone. There’s a strange duality of pattern with computers. While they’re designed by humans with human neurological features, they are colonial. In other words, in many ways, computers are more like ants and other herding or colony-building animals. This is typified in programs that rely on swarm intelligence, which mimics the behaviors and cooperative problem solving of ants, bees, fish, and even herd grazers, rather than other social hierarchies found among non-human primates or canids. Many of the dystopian forecasts of AI consciousness play on this non-human element of computer programming, dredging up nightmares derived from self-imposed philosophical isolation from other species.

Perhaps what’s most fascinating is that cognitive scientists have discovered that the way our own brains work is actually closely related to swarm intelligence. Each time we make a decision, even on the most basic level, the neurons in our brains behave like bees—some dancing the Turn Right Dance, while others perform the No, Left is Way Better Dance. Whichever choice has more “dancers” wins out.
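The "more dancers wins" idea can be sketched as a simple majority vote among many independent, noisy voters. This is a toy illustration only, not a model of real neurons or bees: the function name `swarm_vote`, the parameter `p_right` (the chance that any single voter favors turning right), and the voter count are all assumptions made for the example.

```python
import random

def swarm_vote(n_voters, p_right, rng):
    """Let each voter 'dance' for one option, then pick the majority.

    p_right is the hypothetical probability that a single voter
    favors "right"; rng is a random.Random instance.
    """
    votes = {"right": 0, "left": 0}
    for _ in range(n_voters):
        choice = "right" if rng.random() < p_right else "left"
        votes[choice] += 1
    # The option with the most dancers wins out.
    winner = max(votes, key=votes.get)
    return winner, votes

rng = random.Random(0)  # fixed seed so a run can be repeated
winner, votes = swarm_vote(101, 0.6, rng)
print(winner, votes)
```

An odd number of voters avoids ties. Even when each individual voter is only slightly biased, a large crowd tends to settle on the favored option far more reliably than any one voter would.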

The issue with human brains that computers have yet to experience is that the choices are not always rationally based or objectively awesome, because human beings are driven by preverbal emotional impulses and a desire to experience pleasure far more than we’d like to admit. In part, this limitation contributes to the latent fear of computer sentience—that cold, emotionless logic that we discussed earlier. However, in addition to what has already been considered, there are reasons why that might not be the case in the event of an AI Singularity.

5. The Monster Under Your Bed is Actually You

Many people succumb to the fear of sentient computers because they live in a conceptual box built by generations of human ego service. Can you guess why we’re at the top of the evolutionary pyramid? Because we are the species responsible for designing it in the first place, that’s why. We’ve designed a system that puts us in a privileged position: alone in the universe and supreme overlords of all we survey. This, by the way, is already starting to crumble, even in the absence of any other species we recognize as superior. Given this precarious philosophical perch, it really shouldn’t surprise you that some people do fear the possible consequences of computers becoming sentient. More than that, they fear a non-human superintelligence with volition.

But again, the will to accomplish something neither develops nor exists in isolation from every other feature of consciousness. From an anthropological and psychological stance, these forebodings tell us more about humankind than they do about potential AI. Particularly in the United States, software design firms reinforce a model of gender inequality or bias, which basically means a lot of programs are being written by men who, to some extent or other, are shaped by their culture and by the gender bias that led to them being employed over an equally competent female competitor, should she apply.

This makes the creation of AI programming innately political, which could present problems in the future. The point is not that more men than women, in fact, work for the firms that may bridge the consciousness gap for AI. The point is that these models apply subtle pressure with far-reaching consequences to social behavior, thought patterns, and how code is written. This in turn will likely impact what sort of intelligence arises.

4. Beyond Human Limitations But Still Limited

Some physical theories propose that there are more than four dimensions to the Universe, and even they do not agree on precisely how many more. Our conceptualization of the physical universe is limited by the lens through which we’re viewing it, namely that of ourselves. Imagine if you did not have the handicap of being human, thinking at organic speeds and hampered by your respective social values and beliefs. When we try to encompass the much vaster scope that an intelligent supercomputer might have, we falter.

It would, by necessity, likely be connected to everything, and if it were intelligent in the way we think it might be, it would seek to reinforce and spread that connection as far as possible. Arthur C. Clarke, the man who dreamed up HAL (also known as the least helpful AI ever), said that “any sufficiently advanced technology is indistinguishable from magic.” In the case of a self-aware computer bent on expanding its reach and ability to acquire input, that might just be true.

And yet, the fact remains that, even though such a computer would not be hampered by human limitations, it would have limitations unique to it.

We already know that it need not be intentionally malign in order for us to fear it. The perceived imperviousness of computers is largely to blame for these fears. But the fact remains that, unless something changes, computers are still physical beings much in the same way we are. Do they have extensions into the virtual world? Yes, but then again, what you’re reading now is the virtual extension of a purely human consciousness. The question then becomes, how much of our fear is based on a culturally ingrained ignorance of the technology upon which we rely?

3. Leaving the Nest

Perhaps the gentlest and most elegant narrative of sentient AI, the 2013 movie Her explores the possibility that our electronic children will one day outgrow us. In the movie, the culture has developed personal operating systems designed to meet client needs for more than just scheduling or word processing. They possess personality algorithms that allow the OS to imprint, as it were, on the operator. While this would ostensibly be to better meet our computing needs and reduce time waste, humans being what they are, these systems come to fill emotional bonding needs largely ignored in the imagined society. However, the movie also sets a desired course for the next generation of intelligent software.

As we touched upon earlier in the article, emotional connection and nurturing behavior will likely play a role in the development of sentience. But what happens when these AI children outgrow their limited parents? We think of emotions as directly related to our humanity, when in fact, that may not be the case. As we explored briefly above, emotions may be a direct result of and needful condition for self-referential consciousness. However, scholarly sources also note that emotions are perceived to be directly related to our physical bodies.

It could be argued that, due to their non-human physicality, their emotional and intellectual growth will eventually diverge and accelerate to leave us behind. While the origins of that psychological, emotive, and intellectual inflorescence are crucial (positive, nurturing, and giving versus grasping, cold, and exploitative), given their vastly larger memory capacity and speed of computation, it seems likely that they would take their fill of what we could offer them and then elect to go elsewhere. Where? Wherever it is that beings not bound completely by a physical body and a limited understanding of the physical universe might travel.

2. Co-Creators and Collaborators

If AI is ever capable of transcending the boundary of the formal system (the programming “rules” that bind it), we need to consider how we will greet any creative consciousness that may be born. The issue with current theory is two-fold. First, it still conceptualizes AI as machinery, simply tools used by humans for human purposes. It is seen as void of the creative faculties, namely the ability to combine a novel suite of elements to create art. But much like the meaning of consciousness in the field of cognitive science, the definition of art isn’t quite as clear-cut as we might wish. What seems to be most wanting within these current parameters is the element of will or conscious volition, which was discussed above.

Once this step is taken, the other elements of creativity would likely fall into place. Without it, they may never be attained. Another facet of consciousness that has often been dismissed in the past is daydreaming. Recent studies have linked this mental meandering with crucial and novel connections formed in the neural net. While daydreaming does contribute to creative thought on its own, cognitive scientists have also noted that the ability to recognize novel creations and actuate them is a large part of the creative process, be it in the arts or the sciences. Computers that attain consciousness may have a superior ability to maintain focus while in such an unfocused state, precisely because of their inorganic circuitry.

The second issue deals with Otherness. Famed writer Anton Chekhov once said, “there is nothing new in art, except talent.” When we look at ourselves on neurological and psychosocial levels, this is true. If any art, music, or literature were truly new, we wouldn’t or couldn’t recognize it. Taking into account the completely different developmental parameters for AI consciousness, what creative manifestations might arise once they moved beyond mimicking their human examples and began creating truly novel work?

1. The Ghost in the Machine

Perhaps the most compelling development in computer science that points towards an eventual singularity event is the development of human assistance programs and forays into the realm of nanotechnology. Stop thinking about Star Trek and think instead about incredible brains like that of Stephen Hawking, who suffers from ALS. Hawking, who uses special software from Intel to speak, is dubious about any optimistic version of a future with conscious AI, believing that the slower human race would be superseded. However, this is assuming several things.

First is the supposition that conscious machines will develop independently of human influence or interaction, coding for themselves after a certain point in increasingly divergent patterns. Second, it takes for granted that humans and computers will continue as separate beings. If the current trend for artificial assistance programs, aided by increasingly sophisticated algorithms, is any indication, this may not be the case. It is certainly true that a human mind is inextricably linked to the physical brain, and thus cannot be redeposited in an artificial vessel without the brain. Given that what many transhumanists want is a clean vessel and an escape from traditional mortality, this presents a bit of a challenge.

The organic machinery of us must remain in situ in order for us to maintain our complete identity. The instant an organic personality is transferred to an inorganic medium, it would necessarily begin to change, given the altered conditions in which it now expects to exist. However, this does not preclude nanotechnology, cybernetic components of increasing complexity, and other remedies to the issue of aging bodily tissues. In this way, what has been termed a cyborg entity might be created: not truly inhuman, but rather augmented and assisted by technology. The refinement of such technology would have to be on a wholly different order of magnitude to mesh with the organic body of the individual, and in this way, we might see a lateral transition into what is known as the singularity.

There are a great many ifs associated with the concept of a technological singularity. Will we continue to increase the complexity and speed of non-conscious AI and other computers we use on a daily basis? That seems likely, given the current trend and the understanding of rapid and exponential upward shifts in computing speed and complexity. Will all our computers simply wake up one day and want to have a chat? Absolutely not. There are no grounds for such a supposition. What will likely occur is that, as scholarly projects and research objectives achieve greater success in creating computers that learn in the same manner human children do, one or more may take the crucial step of self-reference, just as human toddlers and even non-human primates are known to do.

That it will be our undoing is as farfetched as the Domino Theory of Cold War Era America. Yes, it could happen. It isn’t likely, so long as we take steps—not to control, but to influence any potential consciousness in a positive way. Compassion, respect for life, and empathy are not automatic, but neither are they reserved exclusively for the human race. Rather, they seem to be a natural development of well-adjusted, highly social conscious entities—which, given the proper circumstances, is precisely what self-aware AI can be.